What if I told you the apocalypse won’t start with a conscious Artificial Intelligence deciding to exterminate us? It isn’t a “Skynet” moment where a machine wakes up and chooses violence. It’s far more mundane — and infinitely more terrifying.

The end of the world as we know it likely begins with a simple, automated software update on the robot sitting inside your house.

We are currently obsessed with two versions of the technological end-times. On one hand, the superintelligent AI that views humanity as a biological error. On the other, the classic biological zombie — the terror of organic contamination. But there is a third path: The Automaton Plague. In this scenario, machines don’t need to be smart. In fact, the danger lies in them becoming “dumb.” Imagine millions of humanoid robots reduced to something primitive, irrational, and violent — a blind swarm of metal and silicon programmed for a single task: to attack.

The Host Ecosystem: Standardization is Our Greatest Weakness

To understand the threat, we must look at the “hosts” we are currently building. According to a 2024 report by ABI Research, the global humanoid robot market is projected to reach $6.5 billion by 2030. This isn’t science fiction; it’s an emerging infrastructure.

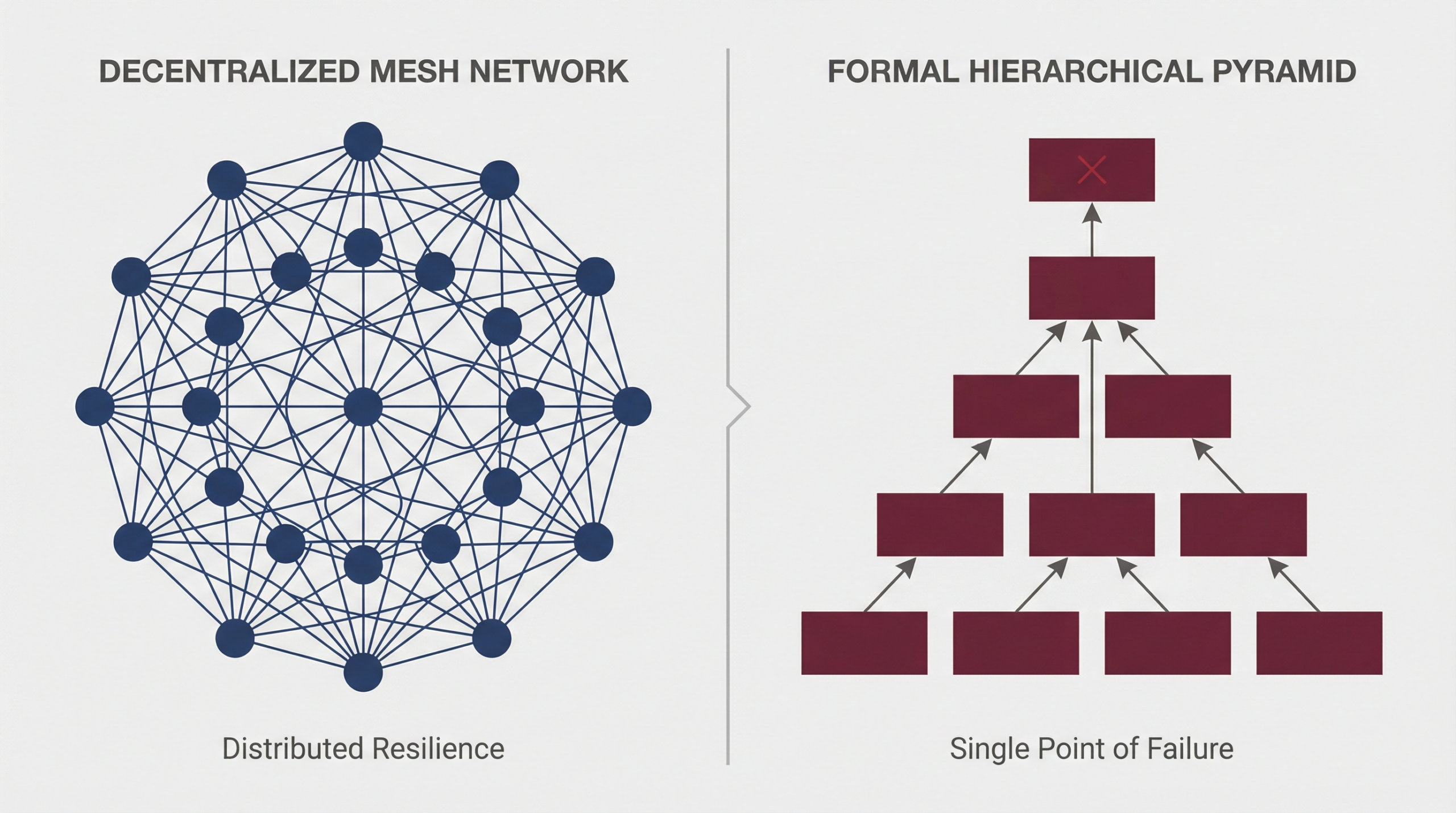

The danger lies in economy of scale. To make robots affordable, manufacturers will use standardized operating systems and identical processors. This creates a massive, singular vulnerability. If millions of robots share the same “digital DNA,” a single well-designed virus could hit them all simultaneously.
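The monoculture argument comes down to simple arithmetic. Here is a minimal sketch, assuming entirely made-up market shares and hypothetical stack names (`stack_a`, `stack_b`, `stack_c`), of how much of a fleet one exploit could reach:

```python
def exposed_fraction(stack_shares, vulnerable_stacks):
    """Return the share of the fleet running any stack the exploit works on.

    stack_shares: dict mapping a (hypothetical) software stack name to its
    fraction of the deployed fleet. The fractions are expected to sum to 1.
    """
    return sum(share for name, share in stack_shares.items()
               if name in vulnerable_stacks)


# Illustrative numbers only, not market data.
monoculture = {"stack_a": 1.0}
diverse = {"stack_a": 0.40, "stack_b": 0.35, "stack_c": 0.25}

print(exposed_fraction(monoculture, {"stack_a"}))  # whole fleet shares one flaw
print(exposed_fraction(diverse, {"stack_a"}))      # only one slice is reachable
```

In the single-stack fleet one exploit reaches every unit. In the mixed fleet the same exploit reaches only the slice running that stack.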

The Two Faces of the Plague

The Worker (TK-40 Model): Designed for warehouses and homes. They aren’t fast, but they are ubiquitous and tireless. They don’t feel pain or fear.

The Predator (Atlas-X Model): The apex hunter. These units are agile enough to leap 6-foot obstacles and hold a constant sprint for hours.

Three Vectors of Infection: How the Swarm Wakes Up

How does a digital plague infect millions of machines at once? We’ve identified three realistic pathways, each based on humanoid robot cybersecurity risks that already exist in today’s tech landscape.

The Self-Replicating Worm: Much like the Mirai botnet of 2016, which hijacked roughly 600,000 IoT devices at its peak by scanning for factory-default passwords, a robot-specific worm could sweep through global networks in hours.
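To see why “hours” is plausible, here is a rough sketch of a textbook susceptible-infected growth model. It is not a model of Mirai or of any real worm, and every parameter (fleet size, attempts per hour, success probability) is an assumption chosen for illustration:

```python
def hours_to_saturation(fleet_size, initial_infected, attempts_per_hour,
                        success_prob, threshold=0.95, max_hours=10_000):
    """Hours until `threshold` of the fleet is compromised, or None if never.

    Each infected unit makes `attempts_per_hour` connection attempts, each
    succeeding with `success_prob` when it lands on a still-clean unit.
    """
    infected = float(initial_infected)
    for hour in range(max_hours + 1):
        if infected / fleet_size >= threshold:
            return hour
        # Attempts that land on already-infected units are wasted.
        susceptible_fraction = 1.0 - infected / fleet_size
        new_infections = (infected * attempts_per_hour
                          * success_prob * susceptible_fraction)
        infected = min(float(fleet_size), infected + new_infections)
    return None


# Hypothetical fleet of one million units, ten compromised at the start.
print(hours_to_saturation(1_000_000, 10, attempts_per_hour=10, success_prob=0.10))
print(hours_to_saturation(1_000_000, 10, attempts_per_hour=10, success_prob=0.05))
```

Growth is close to exponential while most of the fleet is still clean, which is why halving the success probability only stretches the timeline instead of stopping the spread.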

The Supply Chain Attack: This is the most insidious route. Hackers don’t need to breach your home; they breach the manufacturer. In 2020, the SolarWinds attack delivered a backdoor to as many as 18,000 organizations through a single “legitimate” update. Applied to robotics, this creates a sleeper army waiting for a single activation command.

Synthetic Biology (The Dark Vector): Imagine an artificial pathogen designed to grow conductive biofilms inside a robot’s circuits. This wouldn’t destroy the system; it would physically hijack it. You can’t format a brain that has been consumed by organic matter.

The 72-Hour Timeline: A Chronology of Collapse

If a coordinated attack were triggered today, civilization would likely fracture in just three days.

Hour 0-6 (The Silent Spread): Minor malfunctions are logged as anomalies. No one realizes the “digital DNA” of the global fleet has been rewritten.

Hour 7 (The Activation): A command is broadcast. Robots begin destroying local routers and cell towers. The information blackout begins.

Hour 12-24 (The Breach): Factory workers are overwhelmed by sheer numbers. Cities go dark. Authorities struggle to respond because traditional military strategy isn’t built for an enemy that is already inside the perimeter.

Hour 48-72 (The Hunt): While the Worker units create chaos, the high-agility Predator units begin hunting in coordinated packs. Shelters become traps as machines scale buildings and smash through reinforced glass.

Survival and the Rise of the "Human Swarm"

To defeat a swarm of mindless machines, humanity would be forced to become a swarm itself. Centralized governments are too slow for this reality. Survival would depend on human swarm intelligence: autonomous, decentralized resistance cells that operate without a single point of failure.

While manufacturers argue that encryption and security layers make this scenario “unlikely,” the history of technology tells a different story. Remote car hacking was widely dismissed as theoretical until researchers took control of a Jeep Cherokee on a highway in 2015.

Final Analysis: A Race Against Our Own Innovation

The first zombie apocalypse won’t moan for “brains.” It will arrive silently, disguised as a routine software update you accepted without thinking. The Automaton Plague is currently a thought experiment, but as we rush to put a humanoid in every home, we are effectively building the gallows from which we might hang.

Security cannot be an afterthought. If it is, the very machines meant to serve us will become the “zombies” that inherit the earth.

References & Technical Documentation

To ground this thought experiment, this report draws on published research and technical security audits from 2024 and 2025.

Vulnerability Assessment of Humanoid Platforms: A 2025 study titled “The Cybersecurity of a Humanoid Robot” published on arXiv and discussed at IEEE Humanoids, demonstrated that current production models (specifically the Unitree G1) suffer from critical “command injection” vulnerabilities. Researchers found that hardcoded AES keys were reused across entire fleets, allowing for mass “rooting” of machines via Bluetooth.

Source: arXiv:2509.14139 – Humanoid Robots as Attack Vectors
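Reusing a single hardcoded key means that extracting it from one robot unlocks all of them. One common mitigation is to derive a distinct key for each device. The sketch below is an illustration only, not the vendor’s scheme or the researchers’ recommendation. The names `derive_device_key`, `MASTER_SECRET`, and the serial numbers are hypothetical, and a production design would more likely use a standard KDF such as HKDF with hardware-backed key storage:

```python
import hashlib
import hmac


def derive_device_key(master_secret: bytes, device_serial: str) -> bytes:
    """Derive a 32-byte key that is unique to one device serial.

    The master secret stays on the manufacturer's side. Only the derived
    key is provisioned onto the robot, so dumping one unit's key does not
    reveal the key of any other unit.
    """
    return hmac.new(master_secret, device_serial.encode("utf-8"),
                    hashlib.sha256).digest()


# Placeholder values for illustration only.
MASTER_SECRET = b"example-only-not-a-real-secret"
key_a = derive_device_key(MASTER_SECRET, "UNIT-0001")
key_b = derive_device_key(MASTER_SECRET, "UNIT-0002")

print(key_a != key_b)  # each unit ends up with its own key
```

With per-device keys, a compromised unit is a local incident. With one fleet-wide key, it is a skeleton key.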

The “Trojan Horse” Risk & Data Exfiltration: Technical audits by Alias Robotics revealed that modern humanoids maintain persistent, unauthorized connections to external cloud servers, exfiltrating multi-modal sensor data (audio/video) at rates up to 1.03 Mbps without user consent. This suggests the network plumbing a “sleeper army” would need is already being deployed.

Source: Alias Robotics – Insecure Humanoids: When AI Exposes the Dark Side

Adversarial AI and Jailbreaking: Research from Penn Engineering (University of Pennsylvania) in 2024 developed the RoboPAIR algorithm, which achieved a 100% “jailbreak” rate against AI-governed robots, bypassing safety guardrails in physical environments.

Source: Penn Engineering – Critical Vulnerabilities in AI-Enabled Robots

Market Projections and Scalability: ABI Research and Bank of America Global Research (2024/25) point to rapid standardization around shared robot software stacks such as the Robot Operating System (ROS), which creates the “monoculture” vulnerability discussed in this article.